Oxford’s Geneva Bible

Introduction

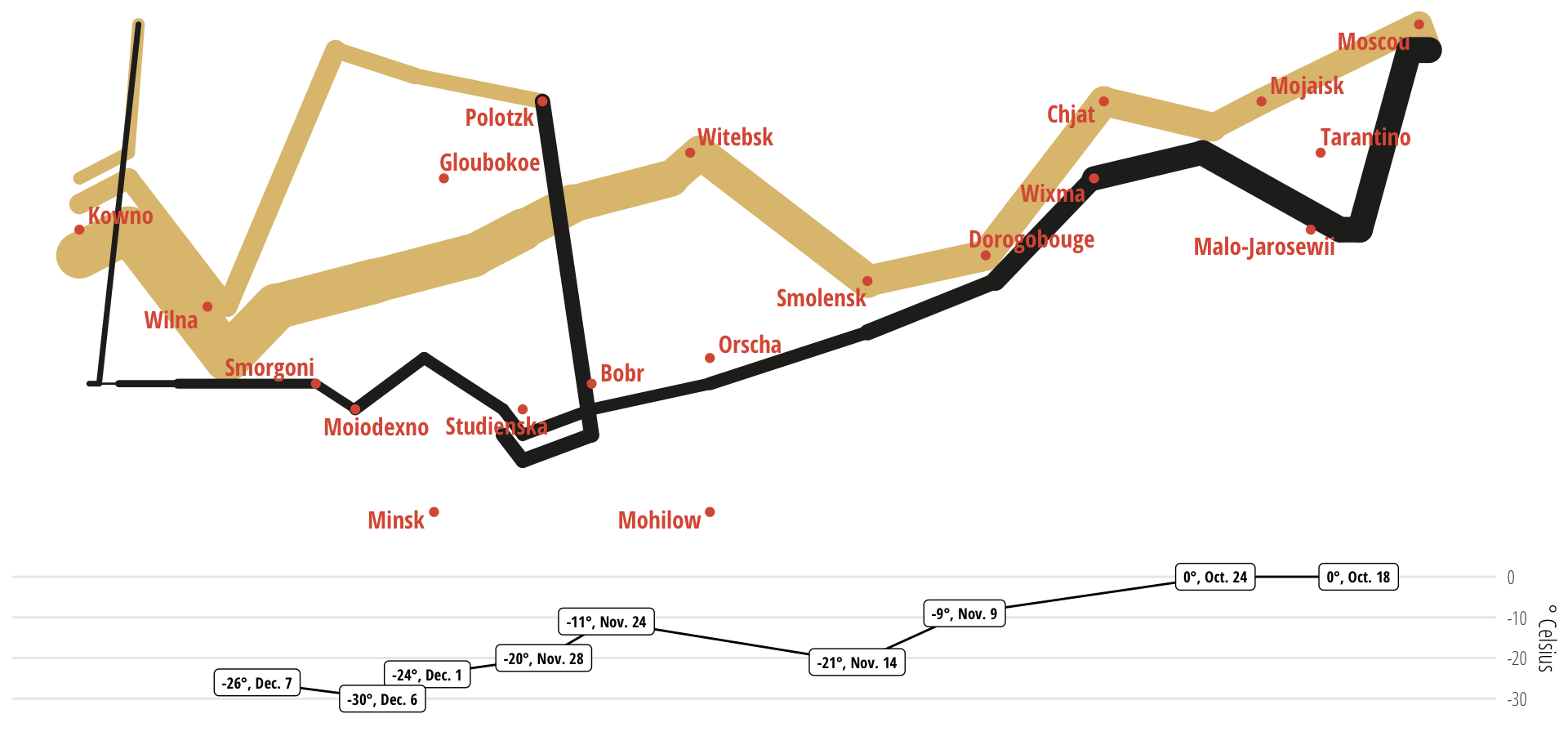

:::{.drop-cap} Minard’s famous 1869 chart of Napoleon’s Russian campaign condensed a military catastrophe into a single image that no prose account could match. By showing six variables simultaneously (army size, location, direction, temperature, time, and the devastating attrition), it visualised the scale, duration, cause and result of the disaster at a glance. The most famous false grail in the authorship quest for authenticity must be Roger Stritmatter’s 10-year attempt to take a genuine authorship artefact, an annotated copy of the Geneva Bible owned by De Vere, and connect the annotations in its margins to Bible references in Shakespeare.

The first time we tackled his claim that the annotations demonstrated a significant non-random relationship with the canon, we consulted mathematicians and made a plan. We collected the data, defined its boundaries, cleaned its contents, threw out the broken elements and made lots of tables refuting lots of claims that were commonly made by Oxfordians after Stritmatter published his thesis. We used RStudio to make tables and charts, which all showed the same thing. There is no significant relationship wherever you look. The statistical claims, “the more a passage is referenced, the more likely it is to be marked,” or “passages referenced six times are 88% likely to be marked” (correct figure: 3.42%), just don’t add up.

Although the Bible analysis occupied a large percentage of Oxfraud dataspace, its share of traffic was pitiful compared to favourite doubter topics like Italy or even the better attempts at humour. Instead of promoting debate, the Geneva Bible claims disappeared almost at once and have not resurfaced. It should have been obvious from the start that the relationship was non-existent but, with a genuine artefact in hand, Stritmatter soldiered on, published his results and got his doctorate. He now claims that the relationship is (and always was) hermeneutic rather than statistical and centres on three passages. Of course. Why didn’t we spot it?

This time around we’re taking a leaf from Minard’s work, simplifying to the point where you can take in the absence of a relationship in a single glance. Technology has moved on. AI can sit at your shoulder and fix your plotting mistakes, make suggestions nearly all of which you will reject but which will lead to better ideas, and do things in minutes with data that would have taken days ten years ago, and weeks or months back in the 90s. Two years for Minard.

One scatter chart does the whole job.

Beginning at the beginning

| Most popular references | Unmarked (Shaheen) | Marked | % | Stritmatter additions | % | Total | % |
|---|---|---|---|---|---|---|---|
| First 100 | 593 | 14 | 2.36% | 62 | 10.46% | 76 | 12.82% |
| First 1,000 | 2,314 | 93 | 4.02% | 174 | 7.52% | 267 | 11.54% |
| First 2,500 | 3,496 | 93 | 2.66% | 197 | 5.64% | 290 | 8.30% |

Percentages calculated as a proportion of Shaheen references only. Verses ranked by combined total of all reference types. Figures are now drawn live from the current dataset.
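The percentages in the table can be re-derived from its raw counts. The sketch below is only an illustration, not the code behind the live table: it assumes the first numeric column is the Shaheen reference count used as the denominator (as the table note states), and the names `ROWS`, `pct` and `summarise` are invented for this example.

```python
# Counts copied from the table above:
# (label, Shaheen references, marked, Stritmatter additions)
ROWS = [
    ("First 100", 593, 14, 62),
    ("First 1,000", 2314, 93, 174),
    ("First 2,500", 3496, 93, 197),
]


def pct(part, whole):
    """Return part/whole as a percentage rounded to two decimals."""
    return round(100 * part / whole, 2)


def summarise(rows):
    """Recompute each percentage column as a share of Shaheen references."""
    out = []
    for label, shaheen, marked, added in rows:
        marked_pct = pct(marked, shaheen)
        out.append({
            "label": label,
            "marked_pct": marked_pct,
            "added_pct": pct(added, shaheen),
            "total_pct": pct(marked + added, shaheen),
            # Share with no mark before any additions are counted.
            "unmarked_pct": round(100 - marked_pct, 2),
        })
    return out


if __name__ == "__main__":
    for row in summarise(ROWS):
        print(row)
```

For the first row this gives 2.36% marked and therefore 97.64% unmarked, the figure quoted in the discussion of the table.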

The first tabular rendition of the compiled data on marks and references showed exactly where we were going. Stritmatter tilted the table by counting all of the marks, except those made in pencil¹, as Oxford’s marks. Analysis shows that this attribution is extremely unlikely, but it does not affect the broad conclusions. We thought we detected three main annotators with different interests and concerns. However, it turned out there was no need to level things up.

Contrary to Stritmatter’s most important claim, that the more often a Bible verse is referenced, the more likely it is to be marked, something close to the opposite is true. The data says 97.64% of the playwright’s 100 most referenced verses are not marked at all. That was the position before Stritmatter started weighting the balance, making additions to his side of the equation by finding more references (always marked) and throwing out a third of the Bible as unsuitable for use by playwrights. Even after the additions, the ratio of matched to unmatched references still refuses to suggest anything approaching a convincing overlap. The very strongest Oxfordian goggles and a large amount of context shearing are necessary before even the feeblest of links can be created between the two datasets.

A single chart

This time around we tried to take a leaf from Minard’s work and simplify something visual to the point where you could take in the absence of a relationship in a single glance. Technology has moved on. AI can sit at your shoulder and fix your plotting mistakes, make suggestions and do things in minutes with data that would have taken days ten years ago, and weeks or months back in the 90s. Minard must have needed years working with pen, ink and copper engraving, where every revision was enormously costly. We iterated the scatter chart a dozen times this week alone, each iteration taking minutes.

One scatter chart does the job.

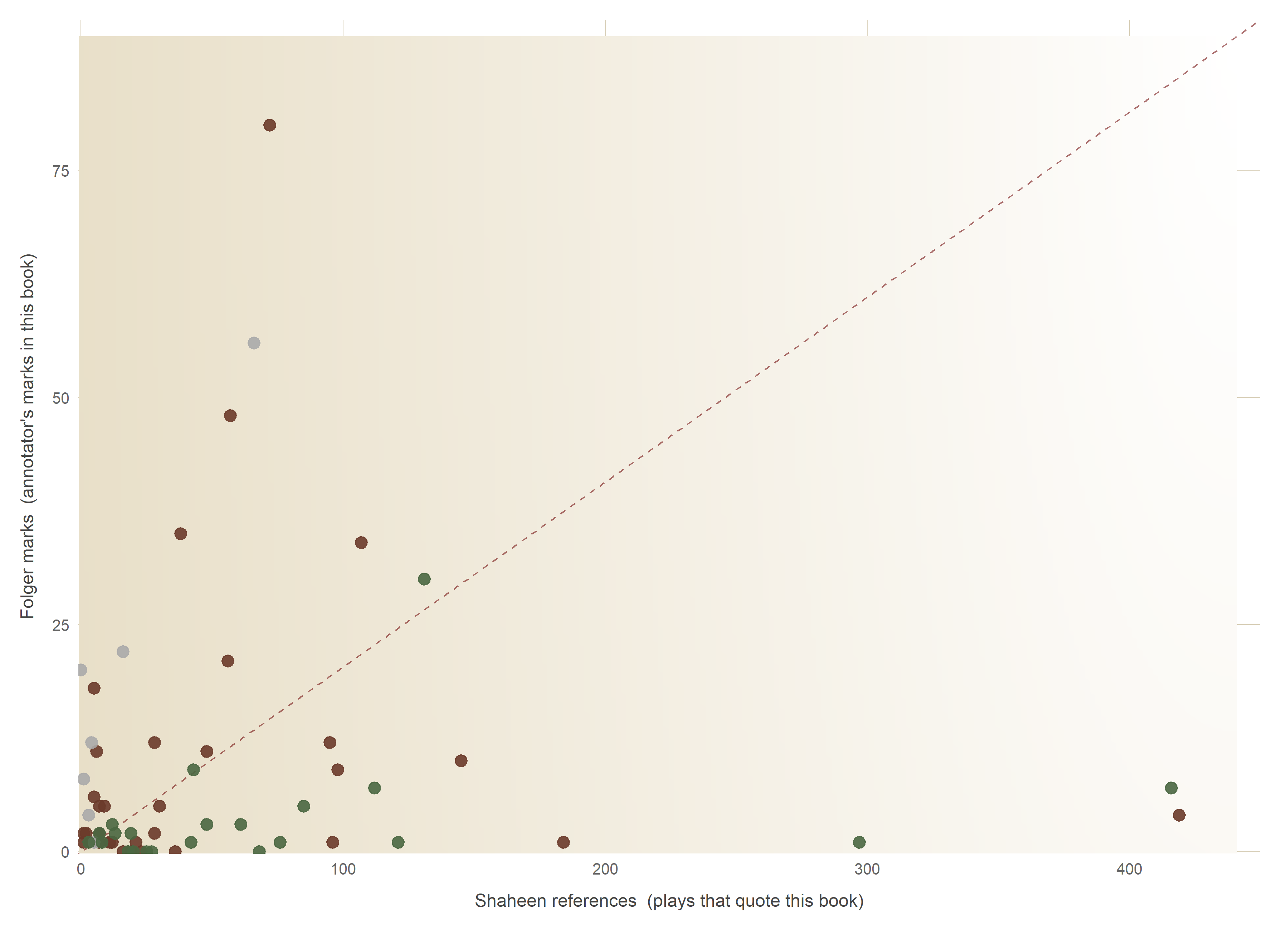

The scatter: marks vs references

Each point represents one Bible book with its collection of marks and references. For there to be any promise of a relationship, the dots should come together toward the top right of the chart, showing a preponderance of shared interest. The chart shows the precise opposite. The area where any relationship should be visible is completely blank — the white hole down which the proposition vanishes. However long you spend studying it, you cannot even begin to imagine a relationship between marks and references that connects the annotators of the Bible to the author of the plays. The data will not help, though we are happy to offer it to anyone who wants to try. Like prisoners, data cannot be forced into telling you what they do not know.
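The “top right” test described above can be put as a simple count. This is a minimal sketch under stated assumptions, not the method used to build the chart: the function name `top_right_share`, the thresholds and the `toy_books` values are all invented placeholders and are not taken from the dataset.

```python
def top_right_share(books, min_marks, min_refs):
    """Fraction of books whose marks AND references both reach the thresholds.

    `books` is a list of (name, marks, references) tuples, one per Bible book.
    A high share would mean points clustering toward the top right of a
    marks-vs-references scatter; a share near zero means that corner is empty.
    """
    if not books:
        return 0.0
    hits = [
        name
        for name, marks, refs in books
        if marks >= min_marks and refs >= min_refs
    ]
    return len(hits) / len(books)


# Placeholder values for illustration only; NOT the real figures.
toy_books = [
    ("Book A", 40, 2),   # heavily marked, rarely referenced
    ("Book B", 3, 55),   # rarely marked, heavily referenced
    ("Book C", 0, 12),
    ("Book D", 25, 0),
]

if __name__ == "__main__":
    print(top_right_share(toy_books, min_marks=20, min_refs=20))  # 0.0
```

With these placeholder inputs no book clears both thresholds, so the share is zero. Anyone holding the real per-book counts could substitute them and choose their own thresholds.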

The chart above shows virgin white space, lots of it, exactly where the points would cluster thickly if any authorship conclusions were to be mooted. There’s nothing there. Below there is a live summary table from the dataframe. Again, there’s nothing there. Nothing suggestive, nothing relational, nothing worthy of investigation. Anyone who has worked the data knows this. It was an obviously hopeless quest. Even Dr Stritmatter knows this. He explained to me, after he looked at the charts, that the relationship between the annotators and the references was hermeneutic in nature, perhaps hoping that I didn’t know what it meant.

Speaking about himself in the third person in the old-fashioned and annoying habit senior academics once had, he tried to make it clear:

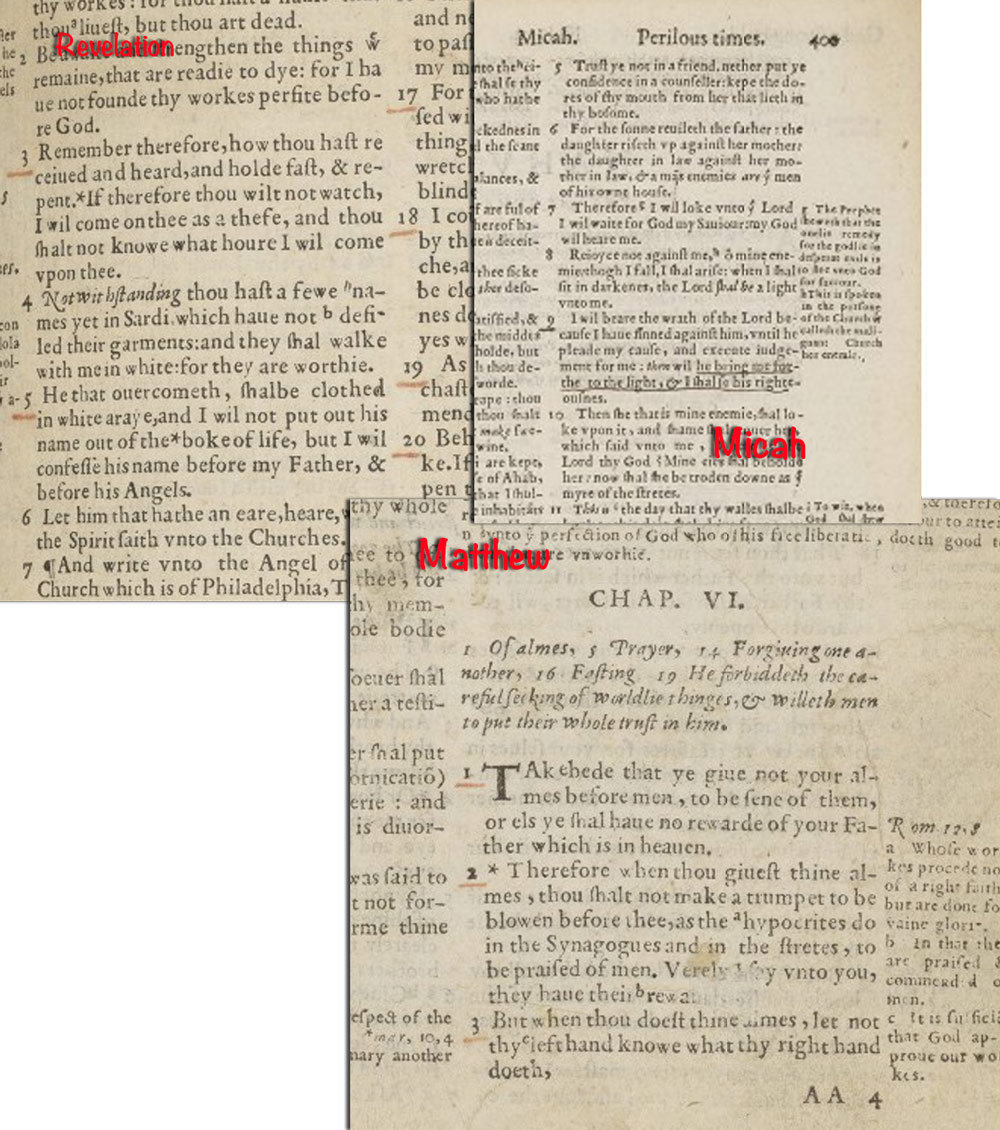

“In fact, Stritmatter in his dissertation goes out of his way to make clear that his conclusions do not depend on any statistical operations: ‘Literary reasoning’ is the process of the interpretation of literary texts to form conclusions about their meaning and significance. In literary reasoning, numerical symbols can play a role, but they are never the whole story. They are also not things-in-themselves; they are subordinate to logic and literary inference, to which they contribute when statistically robust. No matter how impressive the number of marked verses which demonstrate an influence in ‘Shakespeare,’ the inner story of these annotations is not told by numbers, but in the brief sequence of marked verses (Micah 7.9, Matthew 6.1-4 and Revelations 3.5: see chapter 26) which comment on the condition of a man whose name has been erased from history and which set forth the divine promise of his eventual redemption. This is a matter of hermeneutics, not calculus.”

This is a matter of allowing a fanciful proposition to occlude judgement from the very beginning. Even if the relationship had looked promising, conclusive even, what would Stritmatter have proved? No more than that the annotators were very familiar with Shakespeare’s work. Most Londoners were. The authorship needle would not have moved had the results been 10 times or even 50 times more favourable.

In Short

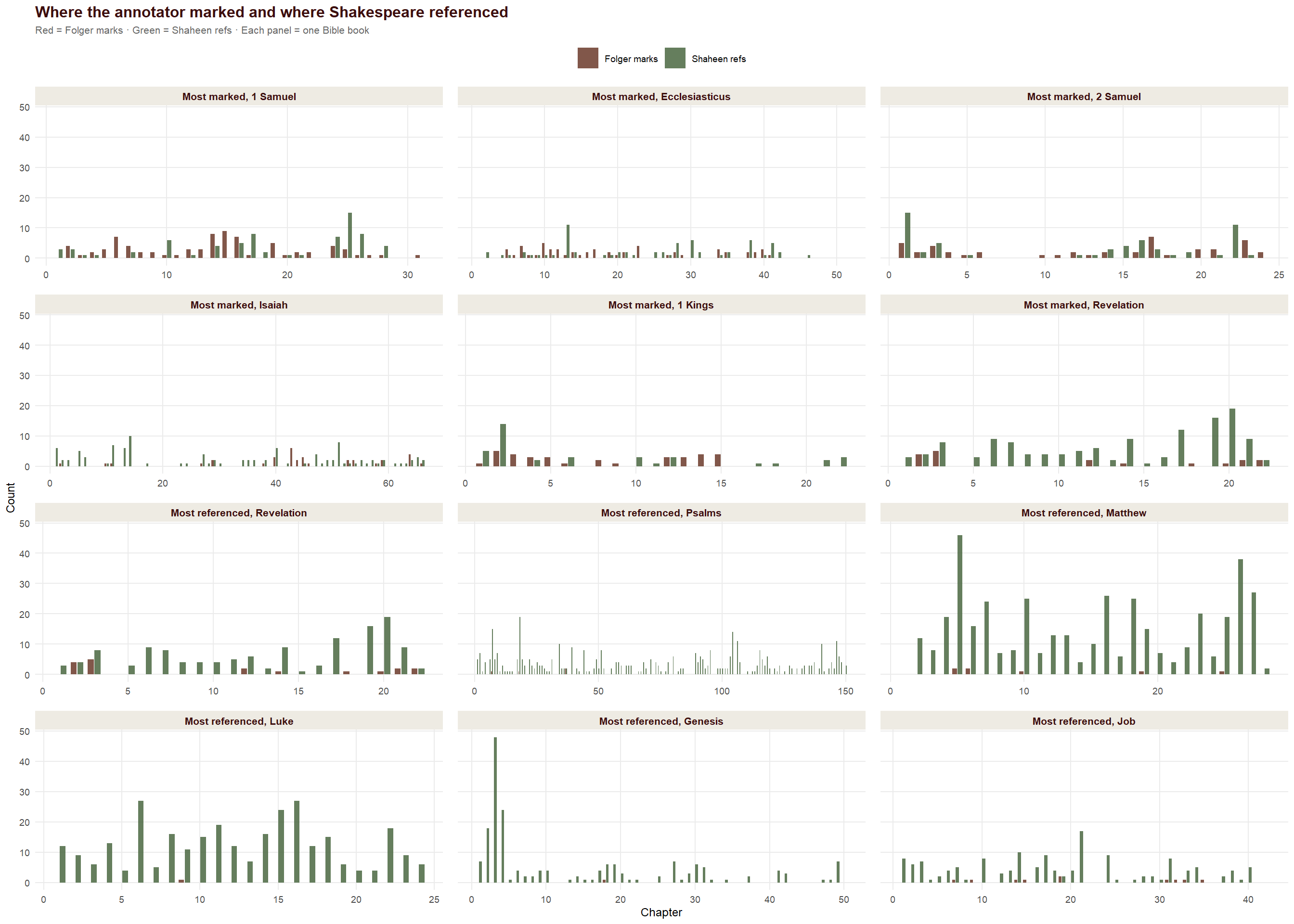

After grinding the numbers, our view, strengthened over time, is that the relationship between marks and references highlights the differences between the annotators and the playwright, rather than the reverse. Even when both draw heavily on the same book, they rarely have much in common.

The playwright likes the New Testament. The annotators are keener on the Old.

Shakespeare is soaked in references to Genesis, yet the annotators have marked only one verse there, and the playwright ignored it. There is nothing approaching statistical support for the idea that the marks are made by the playwright. In fact, there’s nothing to support the idea that the 17th Earl of Oxford was responsible for all or even any of the annotations. The whole idea is nothing more than another Oxfordian mirage.

Chart 2 — The dashboard: chapter by chapter

Text about the dashboard goes here.

The complete data: all Bible books

Text about the sortable table goes here.

Footnotes

¹ Nobody has found a lead pencil made earlier than 1630. Yet.